Nvidia has announced plans to invest up to $100 billion in OpenAI, marking what both companies describe as the largest AI infrastructure partnership in history. The deal, structured as a letter of intent, would see Nvidia acquire non-voting shares in the ChatGPT maker while supplying the advanced processors needed to power OpenAI's expanding network of data centers.

The investment will be deployed progressively, with $10 billion tranches released as each gigawatt of computing capacity comes online. The first phase is targeted for the second half of 2026, built on Nvidia's next-generation Vera Rubin chip platform.

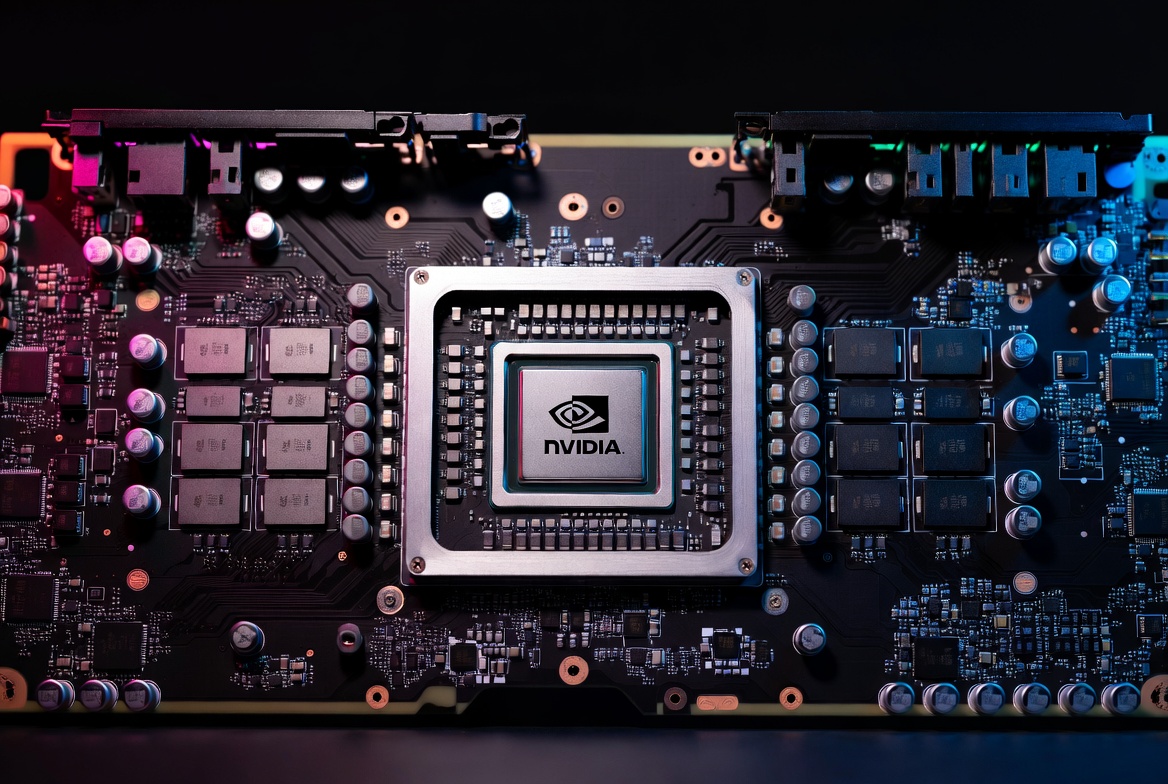

For Nvidia, the deal locks in its most important customer at a time when competitors — including Google, Amazon, and OpenAI itself — are investing heavily in developing their own AI chips. For OpenAI, it secures access to the most advanced processors on the market and the capital to deploy them at an unprecedented scale.

The Scale of What's Being Built

The partnership calls for OpenAI to build and deploy at least 10 gigawatts of Nvidia-powered AI data centers. To put that in perspective, Nvidia CEO Jensen Huang told CNBC that 10 gigawatts translates to roughly 4 to 5 million graphics processing units — about what the company expects to ship in all of 2025 and twice as much as it shipped the year prior.

According to Huang, each gigawatt of AI data center capacity costs between $50 billion and $60 billion to build, with approximately $35 billion of that going toward Nvidia chips and systems. At full buildout, analysts estimate the project could generate as much as $500 billion in revenue for the chipmaker.
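The figures quoted so far can be cross-checked with some simple arithmetic. The sketch below is illustrative only: it takes the per-gigawatt numbers reported above at face value, and it assumes costs scale linearly with capacity and that the full 10 gigawatts is shared evenly across the quoted GPU count. Neither assumption comes from the companies, and the function and constant names are hypothetical.

```python
# Back-of-the-envelope check of the figures quoted in the article.
# All dollar amounts are in billions of USD. Linear scaling with capacity
# is an assumption made for illustration, not a term of the deal.

GIGAWATTS = 10                       # minimum planned capacity
TRANCHE_PER_GW_BN = 10               # Nvidia investment released per gigawatt
BUILD_COST_PER_GW_BN = (50, 60)      # Huang's low/high build cost per gigawatt
NVIDIA_SHARE_PER_GW_BN = 35          # portion of each gigawatt spent on Nvidia gear
GPU_COUNT_RANGE = (4_000_000, 5_000_000)  # GPUs Huang associates with 10 GW


def buildout_summary(gigawatts=GIGAWATTS):
    """Scale the per-gigawatt figures to the full planned capacity."""
    return {
        "investment_bn": gigawatts * TRANCHE_PER_GW_BN,
        "build_cost_bn": tuple(gigawatts * c for c in BUILD_COST_PER_GW_BN),
        "nvidia_hardware_bn": gigawatts * NVIDIA_SHARE_PER_GW_BN,
    }


def kw_per_gpu(gigawatts=GIGAWATTS, gpu_range=GPU_COUNT_RANGE):
    """Implied facility power per GPU in kilowatts, as a (low, high) pair.

    This divides total site power by GPU count, so it includes cooling,
    networking and other overhead, not just the chip itself.
    """
    total_kw = gigawatts * 1_000_000  # 1 gigawatt = 1,000,000 kilowatts
    fewest, most = gpu_range
    return (total_kw / most, total_kw / fewest)


if __name__ == "__main__":
    print(buildout_summary())
    print(kw_per_gpu())
```

Under these assumptions, ten tranches add up to the $100 billion headline figure, the total build cost lands between $500 billion and $600 billion, Nvidia hardware accounts for about $350 billion of that, and each GPU corresponds to roughly 2 to 2.5 kilowatts of facility power. The hardware figure comes out below the analysts' upper estimate of $500 billion, which presumably rests on assumptions not spelled out here.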

"Nvidia and OpenAI have pushed each other for a decade, from the first DGX supercomputer to the breakthrough of ChatGPT," Huang said in a joint statement. "This investment and infrastructure partnership mark the next leap forward — deploying 10 gigawatts to power the next era of intelligence."

OpenAI CEO Sam Altman framed the deal in similarly sweeping terms: "Compute infrastructure will be the basis for the economy of the future, and we will utilize what we're building with Nvidia to both create new AI breakthroughs and empower people and businesses with them at scale."

Part of a Much Larger Buildout

The Nvidia partnership does not exist in isolation. It sits alongside the Stargate initiative, a separate $500 billion infrastructure program backed by OpenAI, Oracle, and SoftBank that was first announced at the White House in January 2025 alongside President Donald Trump.

The flagship Stargate site in Abilene, Texas — roughly 180 miles west of Dallas — is already operational, with Oracle Cloud infrastructure and racks of Nvidia chips running training and inference workloads. Five additional U.S. data center sites were announced the same week as the Nvidia deal, bringing Stargate's total planned capacity to nearly 7 gigawatts and over $400 billion in committed investment.

The new sites were selected from more than 300 proposals across 30 states. They are located in Shackelford County, Texas; Doña Ana County, New Mexico; Lordstown, Ohio; Milam County, Texas; and a Midwest location that has yet to be disclosed.

Going forward, all of OpenAI's infrastructure projects will fall under the Stargate umbrella, according to OpenAI CFO Sarah Friar, who described the pace of construction as historic. "No one in the history of man built data centers this fast," Friar told CNBC from the Abilene site.

A Web of Strategic Relationships

The deal also highlights the increasingly complex web of partnerships that now defines the AI industry. Microsoft, OpenAI's earliest major backer, maintains a strategic partnership providing cloud services through Azure. Oracle has separately agreed to provide $300 billion in computing infrastructure over five years. And SoftBank is contributing financing and energy development expertise.

Nvidia, meanwhile, has been on an investment spree of its own. In the same month as the OpenAI announcement, the chipmaker committed $5 billion to an investment in Intel, paired with a collaboration to co-develop data center and PC chips, and invested close to $700 million in U.K. data center startup Nscale. Nvidia was also among several major U.S. tech firms to announce significant investment in the U.K. during President Trump's state visit, the week before the OpenAI deal was unveiled.

Matt Britzman, senior equity analyst at Hargreaves Lansdown, noted that the partnership cements Nvidia's position at the center of the AI buildout. "By locking in OpenAI as a strategic partner and co-optimizing hardware and software roadmaps, Nvidia is ensuring its GPUs remain the backbone of next-gen AI infrastructure," Britzman said. "The market is clearly big enough for multiple players, but this deal underscores that, when it comes to scale and ecosystem depth, Nvidia is still setting the pace — and raising the stakes for everyone else."

The Circular Funding Question

Not everyone is convinced. Some analysts have raised concerns about what they describe as a circular funding dynamic: Nvidia invests in OpenAI, and OpenAI uses the capital to buy Nvidia's chips.

"Nvidia invests $100 billion in OpenAI, which then OpenAI turns back and gives it back to Nvidia," Bryn Talkington, managing partner at Requisite Capital Management, told CNBC. "I feel like this is going to be very virtuous for Jensen."

The structure resembles arrangements seen across the broader AI ecosystem, where chip suppliers, cloud providers, and model developers hold overlapping financial stakes in one another. OpenAI has also partnered with Nvidia rival AMD, agreeing to deploy 6 gigawatts of AMD's Instinct GPUs. That deal notably includes a binding contract and warrants for up to 160 million shares of AMD stock, a level of formality the Nvidia agreement currently lacks.

As of the announcement, the Nvidia-OpenAI arrangement remains a letter of intent. A definitive agreement has not yet been signed, and Nvidia's quarterly filing includes a standard caveat that "there is no assurance that we will enter into definitive agreements with respect to the OpenAI opportunity or other potential investments."

The Race to Build Custom Chips

OpenAI now has more than 700 million weekly active users and has announced roughly $1.4 trillion in total infrastructure spending commitments across its various partnerships. Altman recently stated that the company expects to end the year on a $20 billion annualized revenue run rate and to reach hundreds of billions of dollars in revenue by 2030.

But the competitive pressure is mounting. Google, Amazon, and Meta are all pouring resources into custom-designed AI chips, aiming to reduce their dependence on Nvidia's hardware. OpenAI itself has been quietly building its own chip design team, exploring a cheaper alternative to the very processors this deal is built around.

For now, Nvidia's ecosystem advantage remains formidable. Its CUDA software platform, which developers have relied on for over a decade, creates a switching cost that rivals have yet to overcome. And with the Vera Rubin platform set to succeed the current Blackwell architecture in 2026, the company is already positioning its next generation of hardware before competitors have caught up with the current one.

As Huang put it: "This is the first 10 gigawatts. I assure you of that."
