Amazon Web Services is positioning its custom-built Trainium chip at the centre of the AI industry's infrastructure. Following a $50 billion investment deal with OpenAI announced in February, AWS has opened its Austin chip development laboratory to the media for the first time, offering a rare look at the hardware that now underpins some of the world's most widely used AI systems.

The timing is deliberate. As demand for AI computing capacity accelerates, the ability to control chip design and production is becoming a significant strategic advantage for cloud providers.

A Chip That Two AI Giants Now Depend On

Anthropic has used Trainium chips as its primary computing infrastructure since its early days, with the Claude AI system running on over one million Trainium2 chips currently deployed across AWS data centres. The scale of that commitment is now matched by OpenAI, which has agreed to consume two gigawatts of Trainium capacity as part of its expanded cloud agreement with Amazon.

AWS is already struggling to produce the chips fast enough. According to Amazon, 1.4 million Trainium chips have been deployed across all three generations, and both Anthropic and Amazon's own Bedrock platform are consuming supply faster than it can be manufactured.

From Training to Inference

Trainium was originally designed to accelerate model training, the computationally intensive process of building AI models from large datasets. It has since been optimised for inference as well. Inference is the stage at which a model responds to a user's query in real time, and it is currently the largest performance bottleneck across the AI industry.

The latest generation, Trainium3, is a 3-nanometre chip manufactured by TSMC. It is designed to run in specialised servers known as Trn3 UltraServers, which Amazon says cost up to 50 per cent less to operate than comparable GPU-based cloud systems. New Neuron networking switches allow every Trainium3 chip within a cluster to communicate directly with every other, reducing latency and improving throughput. AWS also announced a partnership with Cerebras Systems this month that splits the inference process between the two companies' hardware, with Trainium handling one stage and Cerebras handling another. The aim is to deliver significantly faster response times through Amazon Bedrock.

Implications for the Broader Market

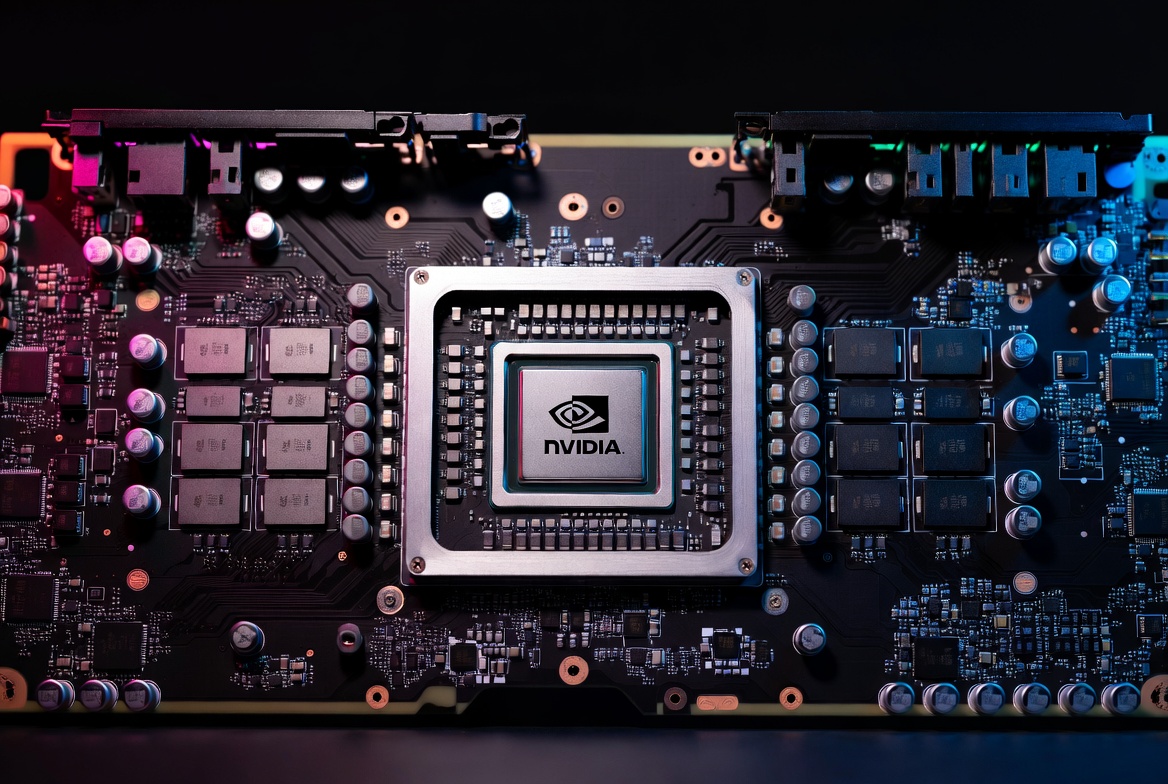

The Trainium strategy reflects a broader shift in how large technology companies approach AI computing. Google has its own Tensor Processing Units, Microsoft has introduced its Maia accelerator, and Meta has developed its own inference chips. The common thread is reducing dependence on Nvidia, whose GPUs remain in high demand and short supply.

Amazon's approach also carries commercial weight. By securing OpenAI as an infrastructure customer alongside its existing relationship with Anthropic, AWS now supplies computing capacity to both of the leading independent AI laboratories.

What Comes Next

Amazon's engineering team in Austin is already developing Trainium4, the next generation of the chip. According to earlier announcements, it will support Nvidia's NVLink Fusion interconnect standard, making it easier for developers to use Trainium and Nvidia hardware together. Deliveries of Trainium4 are expected to begin in 2027.

The OpenAI deal covers the deployment of both Trainium3 and Trainium4 capacity, suggesting the relationship is structured to extend well into the next phase of AI infrastructure development. It remains an open question whether that arrangement can withstand ongoing scrutiny from Microsoft, which has questioned whether the OpenAI-AWS agreement conflicts with its own partnership terms.